An AI search visibility audit is a structured check of whether your brand appears in AI-generated responses from ChatGPT, Perplexity, Google AI Overviews, Gemini, Claude, and Microsoft Copilot when buyers research your category. It takes 15 minutes, requires no paid tools, and produces a prioritized list of visibility gaps with a clear diagnosis for each one.

There is a question that most CMOs cannot answer about their own brand. It is not complicated. It takes about two minutes to find out, and the answer will either confirm something you suspected or change your priorities for the rest of the quarter.

The question: when a potential buyer asks ChatGPT about your category, does your brand appear in the response?

Not your website. Not a page you rank for on Google. Your brand — mentioned, recommended, or cited as a source worth looking at.

According to EMARKETER's 2026 forecast, roughly 31% of the US population will use generative AI search this year. ChatGPT has surpassed 800 million weekly users. Perplexity processes over 100 million queries per month. Google AI Overviews appear on an estimated 30–40% of all search queries. Gemini has surpassed 750 million monthly users. Microsoft Copilot is embedded in Windows, Edge, and Microsoft 365. Claude powers a growing ecosystem of AI-assisted research tools.

Six distinct AI engines, each with its own retrieval logic, each capable of recommending or ignoring your brand. The overwhelming majority of marketing teams have zero visibility into any of them.

This article fixes that in 15 minutes.

Why This Audit Matters More Than Your Google Rankings

An AI search visibility audit matters because AI-referred traffic converts at 4–5 times the rate of traditional organic search traffic, according to the Washington Post's chief revenue officer Karl Wells, as reported by Digiday. Meanwhile, an estimated 93% of AI-powered search sessions end without the user clicking through to any website. Fewer visits, but dramatically higher intent behind each one.

Previsible's 2025 AI Traffic Report measured a 527% year-over-year increase in AI-referred sessions. The channel is small relative to Google organic, but it is growing faster than any other discovery channel in marketing — and the brands being cited are capturing disproportionate value from it.

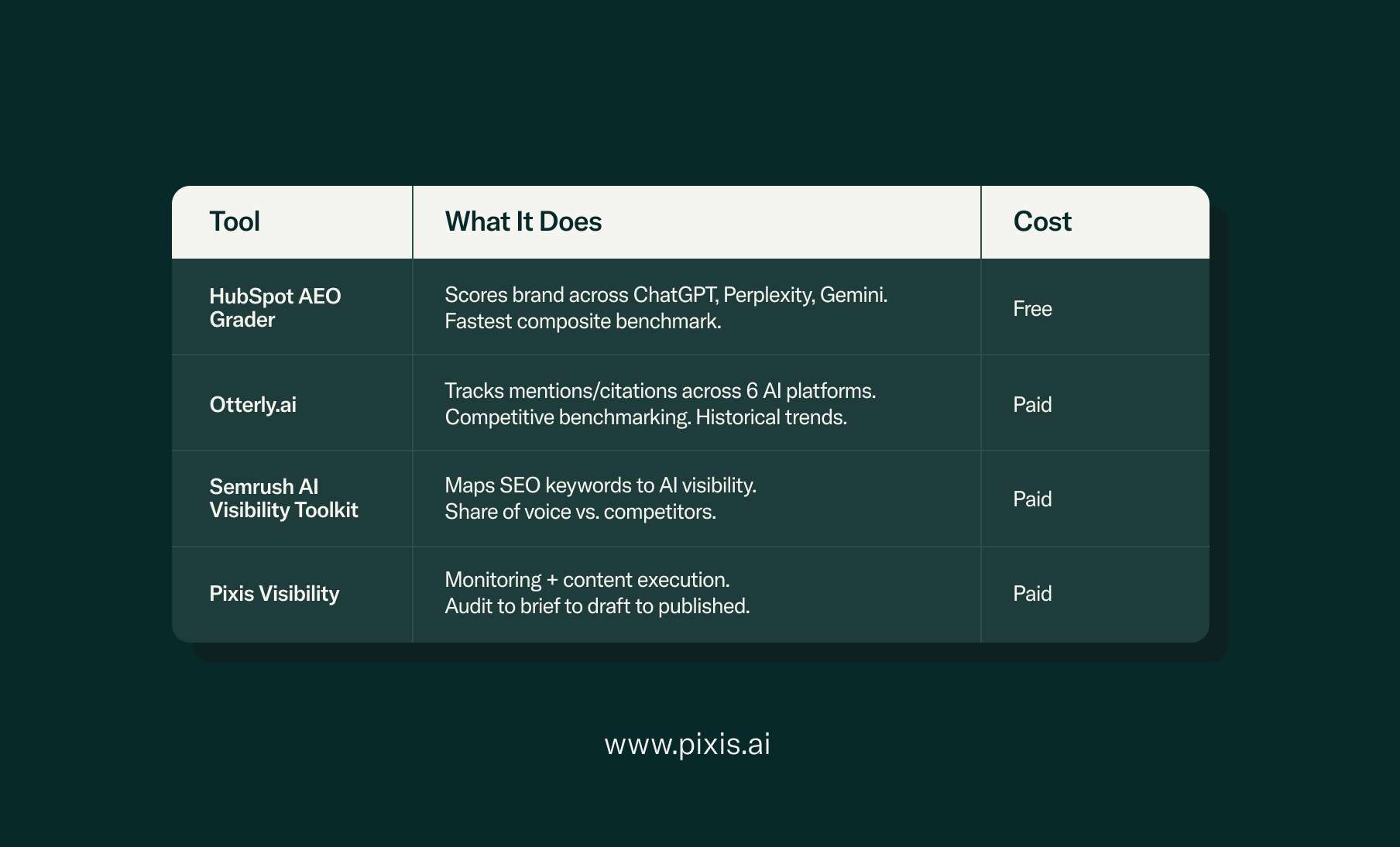

Most teams skip this audit because they assume you need a paid platform to get any visibility data. Tools like HubSpot's AEO Grader, Otterly.ai, and Semrush's AI Visibility Toolkit are genuinely useful for ongoing monitoring. But for a baseline — for knowing where you stand right now — all you need is 15 minutes and the process below.

The 15-Minute AI Search Visibility Audit: Three Stages

The complete audit consists of three stages: building a prompt list (3 minutes), running prompts across AI engines (8 minutes), and mapping results into an actionable diagnostic framework (4 minutes). No paid tools required. The output is a prioritized list of gaps with a clear root cause for each one.

Stage 1: Build Your Prompt List (3 minutes)

A prompt list for an AI search audit is a set of 10 queries that a potential buyer in your category would type into an AI assistant before they know your brand name. It should include category prompts ("what are the best tools for X"), comparison prompts ("compare X and Y and Z"), and problem prompts ("I need a solution for Y, what should I look at").

Open a blank document. Write down 10 prompts your buyers would realistically use while they are researching a solution but have not yet committed to a vendor.

If you sell project management software: "what is the best project management tool for remote teams," "recommend a project management app for a startup with 20 people," "compare Asana Monday and ClickUp for small teams."

If you sell running shoes: "best running shoes for overpronation 2026," "what shoes do marathon runners recommend," "running shoe brands with the best cushioning."

The prompts that matter most happen before someone knows your name — the research phase where AI engines are forming the shortlist on the buyer's behalf.
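As a rough sketch, the three prompt types can be generated from a few inputs so the list stays consistent from one audit to the next. The function name `build_prompt_list`, its parameters, and the sample problem statement are illustrative assumptions, not part of any tool mentioned in this article.

```python
# Hypothetical helper: the name, parameters, and templates are illustrative only.
def build_prompt_list(category, audience, competitors, problem):
    """Return buyer-style prompts grouped by type: category, comparison, problem."""
    return {
        "category": [
            f"what is the best {category} for {audience}",
            f"recommend a {category} for {audience}",
        ],
        "comparison": [
            f"compare {' and '.join(competitors)} for {audience}",
        ],
        "problem": [
            f"I need a solution for {problem}, what should I look at",
        ],
    }


prompts = build_prompt_list(
    category="project management tool",
    audience="remote teams",
    competitors=["Asana", "Monday", "ClickUp"],
    problem="tracking work across time zones",  # made-up example problem
)

for kind, items in prompts.items():
    for prompt in items:
        print(f"[{kind}] {prompt}")
```

Extend the templates until you reach 10 prompts, and keep your own brand name out of all of them so the list reflects the research phase.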

Stage 2: Run the Prompts Across AI Engines (8 minutes)

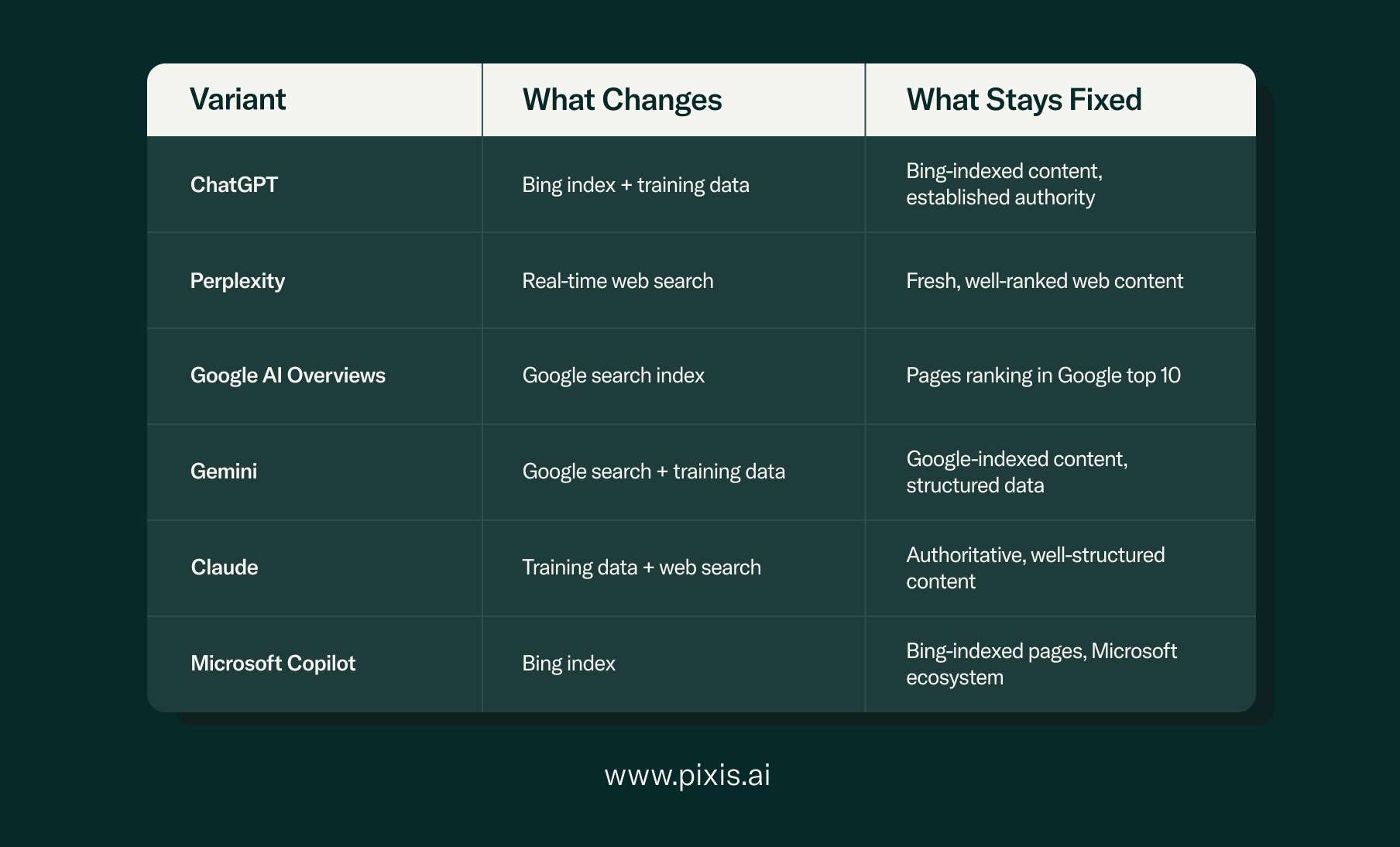

Running prompts across multiple AI engines reveals platform-specific gaps, because each engine uses different retrieval methods and data sources. A brand can appear consistently on Perplexity and be completely absent from ChatGPT.

Open tabs for the six major AI engines: ChatGPT, Perplexity, Google AI Overviews, Gemini, Claude, and Microsoft Copilot.

For a 15-minute audit, prioritize the top three (ChatGPT, Perplexity, Google AI Overviews). Spot-check the other three if time allows. For each prompt, record four things:

Does your brand appear at all? A mention, a recommendation, a citation. Yes or no.

Where in the response? First recommendation, middle of a list, or buried at the end. Position matters — the first brand mentioned carries the strongest implied endorsement.

Which competitors appear instead? The brands appearing when you do not are the ones your buyers are being directed to.

Does the AI link to a source? Perplexity almost always includes source URLs. ChatGPT is less consistent. Note which pages are being cited.

Run each prompt at least twice per platform. AI responses are not deterministic. Semrush's research found that between 40% and 60% of cited sources change month-to-month across Google AI Mode and ChatGPT. If you appear in one response but not the other for the same prompt, your citation authority is fragile.
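If you prefer a structured log over a free-form document, the four recorded fields and the repeat-run check could be captured as in the sketch below. The `Observation` record and the `fragile_citations` function are hypothetical names chosen for illustration, and the sample rows are made-up data.

```python
from dataclasses import dataclass, field


@dataclass
class Observation:
    prompt: str
    engine: str
    run: int                      # 1 or 2, since each prompt is run at least twice
    brand_present: bool           # does your brand appear at all?
    position: str = ""            # "first", "middle", "end", or "" when absent
    competitors: list = field(default_factory=list)
    cited_urls: list = field(default_factory=list)


def fragile_citations(observations):
    """Return (prompt, engine) pairs where the brand appears in some runs but not all."""
    seen = {}
    for obs in observations:
        seen.setdefault((obs.prompt, obs.engine), set()).add(obs.brand_present)
    return sorted(key for key, values in seen.items() if values == {True, False})


PROMPT = "what is the best project management tool for remote teams"
log = [  # made-up sample data
    Observation(PROMPT, "ChatGPT", 1, True, "middle", ["Asana"]),
    Observation(PROMPT, "ChatGPT", 2, False, "", ["Asana", "ClickUp"]),
    Observation(PROMPT, "Perplexity", 1, True, "first"),
    Observation(PROMPT, "Perplexity", 2, True, "first"),
]

for prompt, engine in fragile_citations(log):
    print(f"Fragile on {engine}: {prompt}")
```

Any pair this returns is a prompt where your citation showed up in one run and vanished in another, which is the fragile case described above.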

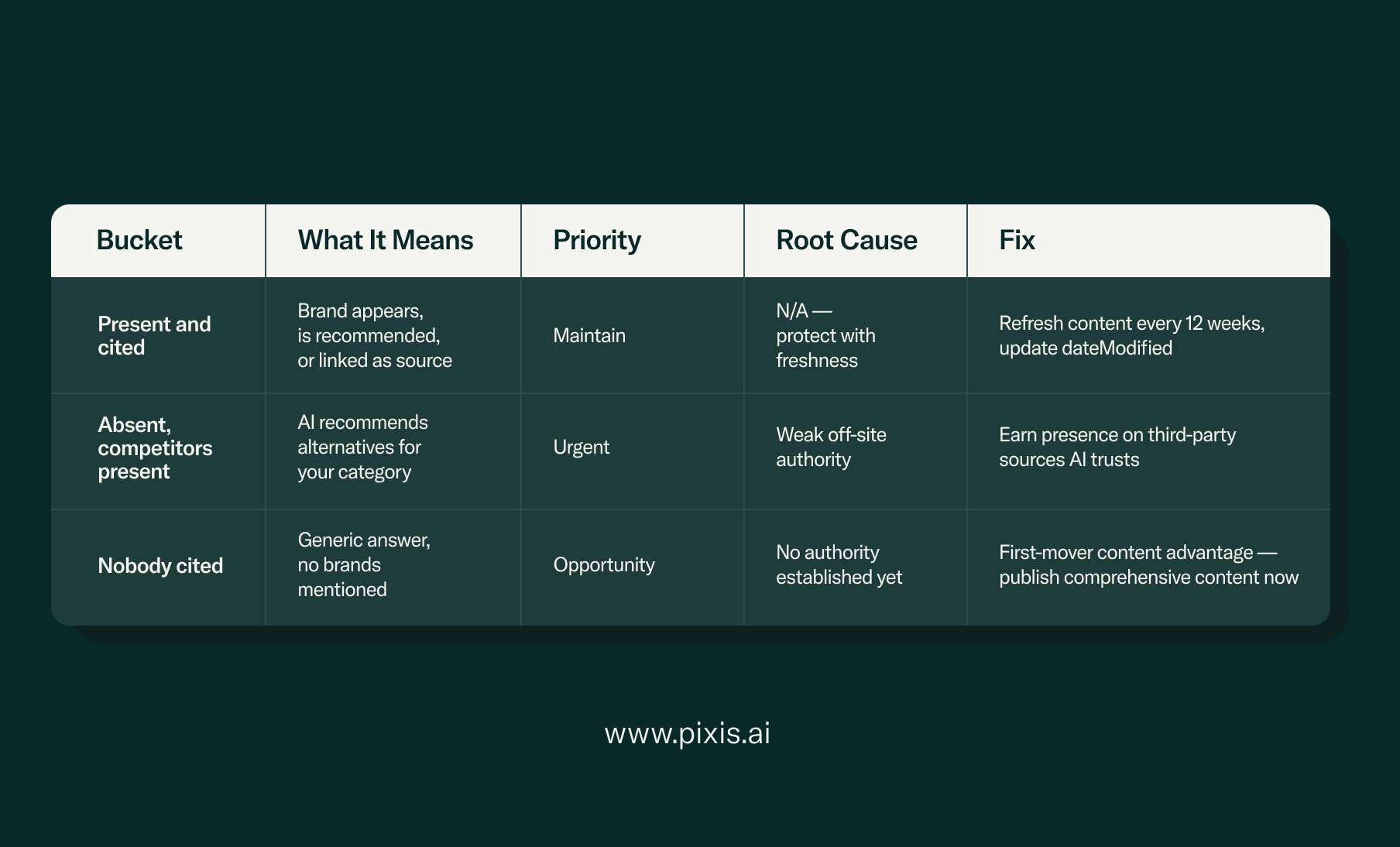

Stage 3: Map the Results Into Three Buckets (4 minutes)

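Mapping results into three diagnostic buckets gives you a clear root cause for each gap and points directly to the right fix. The buckets are Present (your brand is mentioned or cited), Absent with competitors (competitors appear and you do not), and Nobody cited (a generic answer with no brand mentions). Count how many of your 30+ data points fall into each bucket.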

If you are present and cited in fewer than 30% of your prompts, your AI search visibility is materially weak. If competitors appear in more than 50% of the prompts where you are absent, the gap is urgent. If many prompts produce generic answers with no brand mentions, that is an open lane — the AI has not formed a confident opinion yet, and the first brand to publish comprehensive, citation-worthy content will claim that ground.
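The thresholds above reduce to simple arithmetic. The sketch below assumes each data point has already been labeled with one of three hypothetical bucket keys (`present`, `absent_with_competitors`, `nobody_cited`) and treats every data point as one observation; the sample counts are made up.

```python
from collections import Counter


def summarize(results):
    """Apply the 30% and 50% thresholds to a list of bucket labels."""
    counts = Counter(results)
    total = len(results)
    absent = counts["absent_with_competitors"] + counts["nobody_cited"]
    present_share = counts["present"] / total if total else 0.0
    # Share of the "you are absent" results in which competitors did appear.
    competitor_share = counts["absent_with_competitors"] / absent if absent else 0.0
    return {
        "present_share": present_share,
        "weak_visibility": present_share < 0.30,
        "urgent_gap": competitor_share > 0.50,
        "open_lanes": counts["nobody_cited"],
    }


# Made-up sample: 30 data points from 10 prompts across 3 engines.
sample = (
    ["present"] * 6
    + ["absent_with_competitors"] * 15
    + ["nobody_cited"] * 9
)
print(summarize(sample))
```

In this sample the brand is present in 6 of 30 results (20%), competitors appear in 15 of the 24 results where it is absent (62.5%), and 9 results are open lanes.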

That is the audit. Fifteen minutes, no paid tools.

What Each Result Tells You (And the Specific Fix)

Each of the three buckets maps to a different root cause and a different action plan. Treating all visibility gaps the same way is the most common mistake in GEO strategy — a foundational content gap requires a different fix than a platform-specific retrieval issue or a weak off-site authority signal.

If you are invisible across most prompts, the root cause is foundational. AI engines either lack enough information about your brand to form a citation-worthy opinion, or your content is not structured for AI extraction.

The fix starts on your own site. Publish comprehensive, clearly structured content on every core topic in your category. Use direct, factual language — not marketing copy. Structure each page with clear H2 headings and Answer Capsules (40–60 word self-contained blocks directly under each H2 that independently answer the section's core question). Include FAQ sections with FAQPage schema. Princeton's GEO research found that content with cited statistics and source references achieves 30–40% higher AI visibility than content without them. According to Norg.ai's analysis, 72.4% of blog posts cited by AI contain identifiable Answer Capsules, and ChatGPT draws 44% of its citations from the first third of articles.
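A minimal sketch of two of these checks follows: a word-count test for the 40–60 word Answer Capsule range, and a builder for FAQPage markup using the schema.org `Question` and `Answer` types. The function names are illustrative assumptions, and how the JSON-LD gets embedded in a page depends on your CMS.

```python
import json


def is_answer_capsule(text, low=40, high=60):
    """True when the block falls inside the 40-60 word range."""
    return low <= len(text.split()) <= high


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    (
        "How often should I run an AI search visibility audit?",
        "Monthly at minimum. AI citation patterns are volatile.",
    ),
])
print(json.dumps(faq, indent=2))
```

The printed JSON is typically placed in a `<script type="application/ld+json">` tag on the page that contains the visible FAQ.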

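Check your robots.txt. Go to yourdomain.com/robots.txt and look for GPTBot, PerplexityBot, ClaudeBot, and Google-Extended. If any of these user agents is paired with "Disallow: /", that crawler is blocked from your entire site and the corresponding AI engine cannot access your content. A blanket "Disallow: /" under "User-agent: *" has the same effect for any crawler without its own rule group. According to Cloudflare's 2025 data, GPTBot crawls 8 times more frequently than Googlebot, so a robots.txt misconfiguration is the single highest-leverage technical issue to fix for AI visibility.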
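The same check can be scripted with Python's standard-library `urllib.robotparser`. This is a sketch under simple assumptions: the robots.txt content is passed in as a string, `blocked_agents` is a hypothetical helper name, and the sample file is made up.

```python
from urllib.robotparser import RobotFileParser

AI_AGENTS = ["GPTBot", "PerplexityBot", "ClaudeBot", "Google-Extended"]


def blocked_agents(robots_txt, url="https://example.com/"):
    """Return the AI user agents that may not fetch the given URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_AGENTS if not parser.can_fetch(agent, url)]


# Made-up robots.txt that blocks only GPTBot.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_agents(sample_robots))
```

To test a live site instead of a string, `RobotFileParser` also provides `set_url()` and `read()`, which fetch the file over the network before you call `can_fetch()`.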

If you appear on some platforms but not others, the root cause is platform-specific retrieval.

Perplexity runs real-time web searches and cites whatever currently ranks well on the open web. If you rank on Google but do not appear on Perplexity, your content may lack the structural elements that make it extractable.

ChatGPT relies on Bing's search index. If you appear on Perplexity but not ChatGPT, verify your Bing Webmaster Tools setup and ensure your content is indexed there. Changes typically take 6–12 weeks to reflect in ChatGPT.

Google AI Overviews pull from Google's own search index. Of the sources cited in AI Overviews, 97% come from Google's top 20 organic results, so strengthening your existing organic rankings directly improves AI Overview visibility.

Gemini uses Google search data combined with its own training data and tends to favor content with strong structured data markup.

Microsoft Copilot relies on Bing's index, similar to ChatGPT, and operates within the Microsoft ecosystem.

Claude prioritizes well-structured, authoritative content from verifiable sources.

If competitors dominate and you are absent, the root cause is off-site authority. AI models are pattern matchers. When they see your brand mentioned authoritatively across multiple independent sources — a G2 review, an industry publication, a Reddit thread, a comparison article — they develop higher citation confidence. One mention on your own blog is a single data point. Multiple independent mentions across trusted sources compound into a signal that is difficult for competitors to displace once established.

Identify which third-party sites AI engines cite for your category — you saw this during the audit. If Perplexity consistently cites a particular industry blog when answering prompts in your space, earning a mention there has direct, measurable impact. This is the difference between a content calendar and a GEO strategy — one publishes on your own blog, the other earns authority where AI engines actually look.
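If you recorded the source URLs during Stage 2, a short tally shows which third-party domains the engines lean on most. The sketch below uses only the standard library; `top_cited_domains` is a hypothetical helper and the URLs are placeholders on reserved example domains.

```python
from collections import Counter
from urllib.parse import urlparse


def top_cited_domains(cited_urls, own_domain, limit=5):
    """Count cited domains, ignoring your own site, most frequent first."""
    domains = Counter()
    for url in cited_urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host and host != own_domain:
            domains[host] += 1
    return domains.most_common(limit)


# Placeholder URLs standing in for the sources noted during the audit.
cited = [
    "https://www.industry-blog.example/best-tools",
    "https://industry-blog.example/comparison",
    "https://industry-blog.example/guide",
    "https://reviews.example/category/project-management",
    "https://reviews.example/top-10",
    "https://yourdomain.example/blog/post",
]

for domain, count in top_cited_domains(cited, own_domain="yourdomain.example"):
    print(f"{domain}: {count}")
```

The domains at the top of this list are the ones where an earned mention is most likely to matter for your category.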

Closing the Gap Between Audit and Action

The distance between "we know we are invisible in AI search" and "we published content that fixed it" is where most GEO strategies stall. That gap is typically 4–8 weeks of manual work: brief creation, content commissioning, review cycles, CMS publishing logistics.

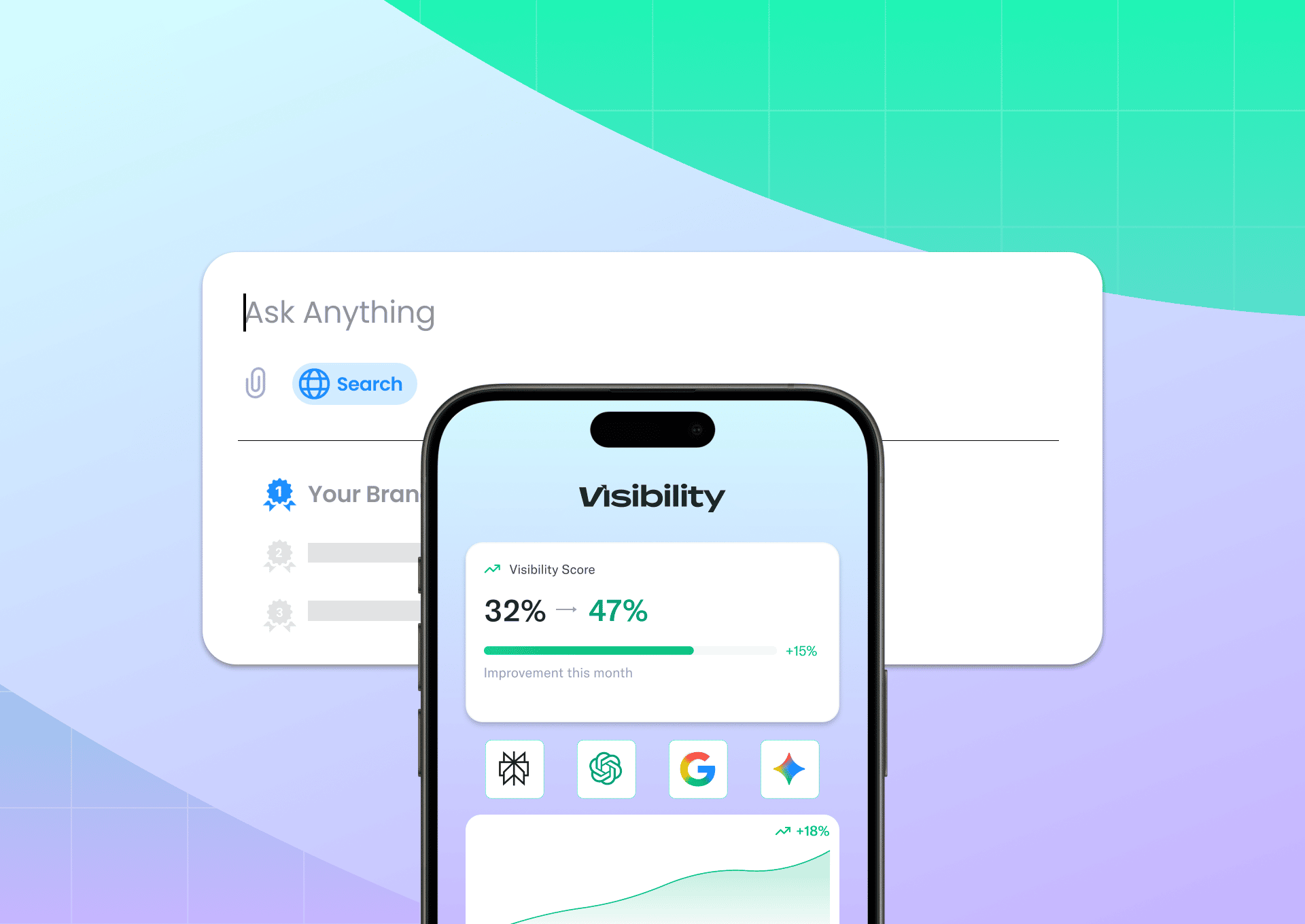

Pixis Visibility connects that full pipeline — identifying where your brand stands in AI search, generating the briefs to close gaps, and publishing directly to your CMS. Both SEO and GEO in one workflow, so insight-to-published-content drops from weeks to days. The platform sits in the intelligence layer described in the Agentic Marketing Stack framework — interpreting performance across channels and turning insights into execution.

The audit you just ran is a snapshot. Set a calendar reminder to repeat it monthly. AI citation patterns are volatile, and a monthly check catches shifts before they become entrenched.

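For continuous monitoring between manual audits, tools like HubSpot's AEO Grader, Otterly.ai, and Semrush's AI Visibility Toolkit provide ongoing tracking.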

Content freshness is the most underestimated factor in maintaining citations. According to Amsive's research, 50% of content cited in AI responses is less than 13 weeks old. If you published a piece six months ago and have not updated it, you risk being replaced by someone who published more recently on the same topic. Refresh your highest-priority pages every 12 weeks and update the dateModified field in your Article schema each time.
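The 12-week refresh rule is easy to automate against the dates already in your schema. The sketch below assumes ISO-format dates and uses hypothetical helper names (`needs_refresh`, `article_jsonld`); the headline and dates are sample values.

```python
import json
from datetime import date, timedelta

REFRESH_INTERVAL = timedelta(weeks=12)


def needs_refresh(date_modified, today):
    """True when the page was last modified more than 12 weeks before `today`."""
    return today - date.fromisoformat(date_modified) > REFRESH_INTERVAL


def article_jsonld(headline, date_published, date_modified):
    """Build a minimal schema.org Article object with publication dates."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        "dateModified": date_modified,
    }


# Sample values for illustration only.
article = article_jsonld(
    headline="The 15-Minute AI Search Visibility Audit",
    date_published="2026-01-01",
    date_modified="2026-01-01",
)
print(json.dumps(article, indent=2))
print(needs_refresh(article["dateModified"], today=date(2026, 4, 1)))
```

Only change dateModified when the page content has actually been revised, so the date in the markup matches what a reader sees.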

Frequently Asked Questions

What is an AI search visibility audit?

An AI search visibility audit is a structured review of whether your brand appears in responses from ChatGPT, Perplexity, Google AI Overviews, Gemini, Claude, and Microsoft Copilot. It measures citation presence (does the AI mention you), citation position (where in the response), and competitive gaps (which competitors appear instead). The manual version takes 15 minutes using 10 category-relevant prompts run across three or more AI engines.

How often should I run an AI search visibility audit?

Monthly at minimum. AI citation patterns are volatile — between 40% and 60% of cited sources change month-to-month across ChatGPT and Google AI Mode, according to Semrush. Quarterly is too infrequent. Between manual audits, tools like HubSpot's AEO Grader or Otterly.ai provide continuous tracking.

Does good SEO automatically mean good AI visibility?

Strong SEO is a prerequisite but not a guarantee. 97% of sources cited in Google AI Overviews come from Google's top 20 organic results, so rankings increase citation probability significantly. But AI citation also requires Answer Capsules (40–60 word extractable blocks), FAQ sections with FAQPage schema, comprehensive entity coverage, and multi-source authority across third-party sites.

Why does my brand appear on Perplexity but not ChatGPT?

Perplexity runs real-time web searches for every query and cites current web content. ChatGPT relies primarily on Bing's search index and its training data. If you appear on Perplexity but not ChatGPT, the most likely cause is weak Bing index coverage. Verify your Bing Webmaster Tools setup. Changes take 6–12 weeks to reflect in ChatGPT, while Perplexity reflects them in 2–4 weeks.

What is an Answer Capsule and why does it matter for AI citation?

An Answer Capsule is a 40–60 word self-contained paragraph placed directly below an H2 heading that independently answers a specific question without requiring surrounding context. 72.4% of blog posts cited by AI contain identifiable Answer Capsules (Norg.ai), and ChatGPT draws 44% of its citations from the first third of articles. AI engines extract information in discrete blocks — a self-contained answer is significantly easier to cite than a paragraph requiring three preceding paragraphs of context to make sense.

Can I fix inaccurate AI responses about my brand?

Yes. Publish a clear, authoritative page with correct information, implement Article schema with accurate dateModified, and ensure crawlers can access it. Perplexity, which searches the web in real time, typically reflects changes within 2–4 weeks, as do Google AI Overviews. ChatGPT may take 6–12 weeks due to Bing index refresh cycles.

How does an AI search audit differ from a traditional SEO audit?

Traditional SEO audits measure rankings, backlinks, page speed, and on-page optimization against a list of blue links. AI search audits measure whether content gets cited inside AI-generated responses where no list of links exists. AI audits examine citability (can AI extract a clear answer), entity authority (does your brand appear across trusted third-party sources), technical accessibility (are AI crawlers allowed in robots.txt), and platform-specific visibility (do you appear on all six major engines or only some).

Key Takeaways

An AI search visibility audit is a 15-minute manual check of whether your brand appears in responses from ChatGPT, Perplexity, Google AI Overviews, Gemini, Claude, and Microsoft Copilot. Most marketing teams have never run one.

AI search traffic converts at 4–5x the rate of traditional organic. AI-referred sessions grew 527% year-over-year in Previsible's 2025 AI Traffic Report. Brands being cited capture disproportionate value from the highest-intent visitors on the internet.

Each AI engine uses different retrieval methods. ChatGPT relies on Bing. Perplexity runs real-time web searches. Google AI Overviews draw from existing organic rankings. Knowing which platform you are invisible on tells you which fix to prioritize.

The three audit buckets (Present, Absent with competitors, Nobody cited) each map to a different root cause: foundational content gaps, platform-specific retrieval issues, or weak off-site authority. Treating all gaps the same is the most common GEO strategy mistake.

Content freshness matters more than most teams realize. 50% of cited content is less than 13 weeks old. Refresh highest-priority pages quarterly and update dateModified in schema each time.

The gap between audit and action is where most strategies stall. The teams making progress have the shortest distance from finding to published content.

Pixis Visibility is the SEO + GEO execution platform that connects audit findings to content creation to CMS publishing in one pipeline.

Self-check: Does this article follow its own advice?

- Answer Capsule under every H2 (40-60 word self-contained block)

- Six AI engines covered as distinct entities with retrieval differences

- Structured tables for extractable frameworks (engine comparison, diagnostic buckets, tools)

- Every stat cited with source URL (13 sourced data points)

- FAQ with 7 direct-answer questions formatted for FAQPage schema

- Key Takeaways as standalone GEO summary block

- dateModified guidance in metadata + referenced in body

- Article, FAQPage, and HowTo schema recommended

- H2 hierarchy matches common query patterns

- Internal links: Pixis Visibility (4x), Agentic Marketing Stack blog (1x)

- External links: 14 authoritative third-party sources