Comparing ROAS across Meta, Google, and TikTok is one of the most common — and most misleading — exercises in performance marketing. The numbers from each platform look like they're measuring the same thing. They're not. Each platform uses different attribution windows, counts conversions differently, and serves a different role in the purchase journey. A direct comparison without accounting for those differences doesn't tell you which platform is working. It tells you which platform is best at claiming credit.

Here's the situation most performance teams find themselves in: Meta reports a 3.2x ROAS. Google reports 5.1x. TikTok reports 1.6x. The instinct is to cut TikTok, double down on Google, and treat Meta as a middle-ground channel. But when you pause TikTok, branded search volume on Google drops and Meta retargeting pools shrink. The channel looked inefficient in isolation. It was doing more work than the number suggested.

This is the core problem with cross-platform ROAS comparison: each platform is an advocate for itself. Meta wants to prove Meta works. Google wants to prove Google works. TikTok wants to prove TikTok works. When a customer touches multiple platforms before converting, all three will try to claim credit — and the sum of their reported conversions often exceeds your actual sales by 50 to 200%.

This article explains why platform-reported ROAS figures aren't directly comparable, what a fair cross-platform comparison actually requires, and how to build a measurement framework that gives you an accurate read on where your budget is earning its keep.

The Benchmark Problem: What Each Platform's ROAS Actually Reflects

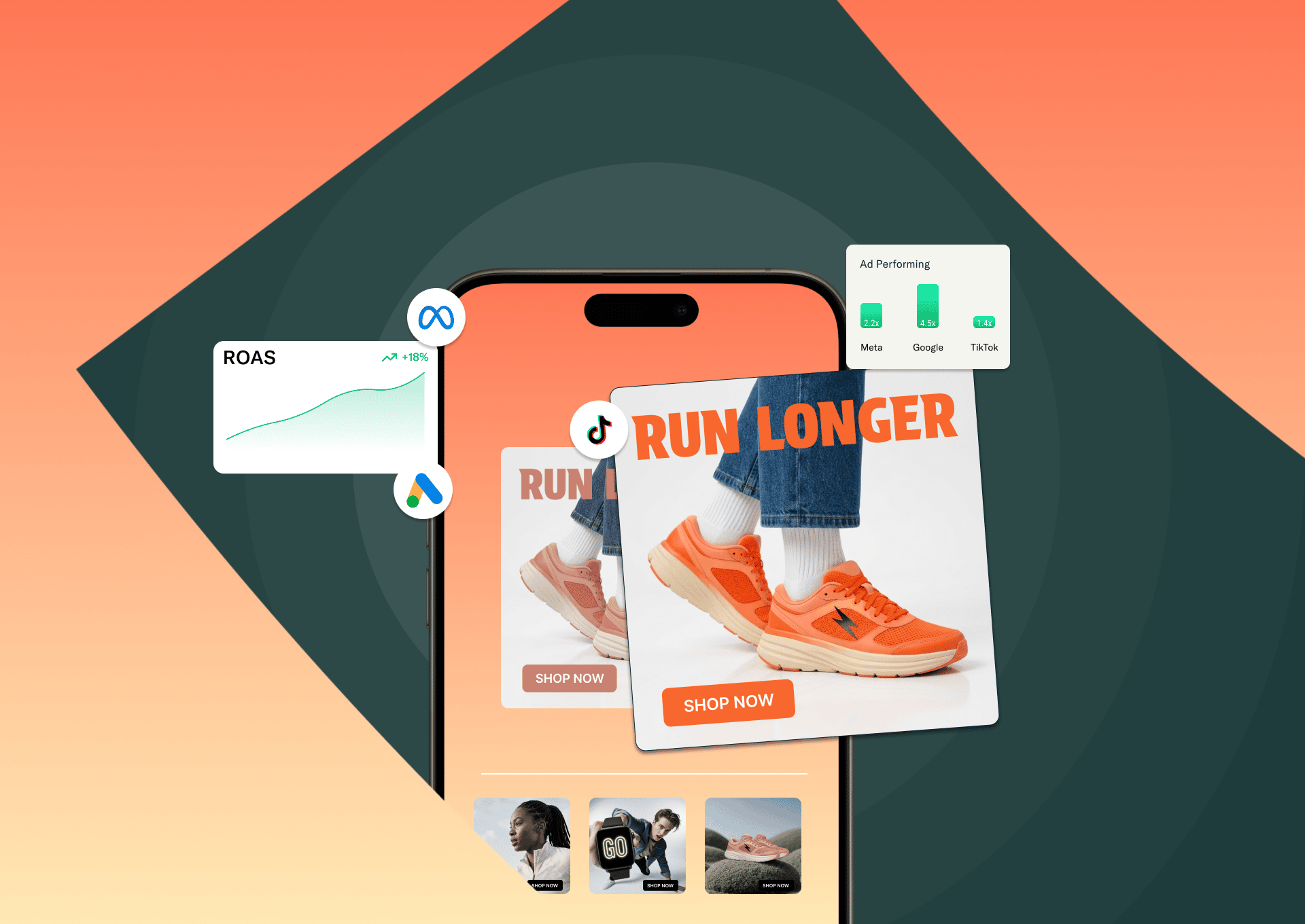

Before making any decisions based on platform numbers, it's worth understanding what those numbers are actually measuring — because Google's 4.5x and TikTok's 1.4x aren't different grades on the same test. They're different tests entirely.

Across 2025 data, median ROAS by platform breaks down roughly as follows: Google Ads at approximately 3.3–4.5x, Meta at 2.2–2.8x, and TikTok at 1.4–1.6x. Search campaigns on Google run higher because they capture people already in buying mode — the query is the signal of intent. TikTok sits lower because it reaches people who aren't yet looking to buy. The benchmark reflects the position in the funnel, not the value of the channel.

Google Search's advantage is structural: it harvests demand that already exists. Meta and TikTok create that demand upstream. Comparing their ROAS figures side by side without accounting for funnel position is like comparing a closer's conversion rate to a prospector's and concluding the closer is more valuable. This distinction — between channels that harvest intent and channels that generate it — is central to understanding why more ads on a single channel don't improve ROAS when the rest of the funnel isn't working.

Why Platform-Reported ROAS Isn't Comparable: The Attribution Window Problem

The most significant reason you can't compare ROAS across platforms directly is attribution windows — and each platform has set its defaults in ways that favor its own performance.

Meta currently defaults to a 7-day click and 1-day view attribution window. Google Ads defaults to 30 days for Search campaigns, with options to extend to 90 days. TikTok offers 1, 7, or 28-day click windows, with an "engaged view" model that counts conversions from users who watched at least 6 seconds of your ad, then converted within 1 to 7 days afterward — capturing 30 to 40% of conversions that last-click models miss entirely. If you're running Meta on a 7-day window and Google on a 30-day window, you cannot directly compare their ROAS. You're measuring different time horizons and calling it an apples-to-apples comparison.

The practical consequence: if a customer clicks a TikTok ad, then clicks a Meta ad three days later, then converts via a Google branded search on day five, all three platforms may claim that conversion. Your actual sale happened once. Your reported conversions across platforms may show it three times.
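
To make the double counting concrete, here is a minimal Python sketch of that journey. The window lengths are assumptions for illustration: Meta's 7 days and Google's 30 days are the defaults mentioned above, TikTok's 7 days is one of its selectable click windows, and `platforms_claiming` is a hypothetical helper rather than any platform's API. View-through windows are ignored.

```python
from datetime import datetime, timedelta

# Click-through windows in days. Meta's 7 and Google's 30 are the defaults
# cited above; TikTok's 7 is an assumed choice from its 1/7/28-day options.
CLICK_WINDOWS = {"tiktok": 7, "meta": 7, "google": 30}


def platforms_claiming(clicks, converted_at, windows=CLICK_WINDOWS):
    """Return the platforms whose click window covers the conversion.

    clicks maps a platform name to the datetime of that platform's ad click.
    """
    claimants = []
    for platform, clicked_at in clicks.items():
        elapsed = converted_at - clicked_at
        if timedelta(0) <= elapsed <= timedelta(days=windows[platform]):
            claimants.append(platform)
    return claimants


# The journey described above: TikTok click, Meta click three days later,
# purchase shortly after a Google branded search click on day five.
day0 = datetime(2025, 3, 1, 12, 0)
clicks = {
    "tiktok": day0,
    "meta": day0 + timedelta(days=3),
    "google": day0 + timedelta(days=5),
}
purchase = day0 + timedelta(days=5, minutes=10)

claimants = platforms_claiming(clicks, purchase)
print(claimants)  # ['tiktok', 'meta', 'google']
print(f"1 actual sale, {len(claimants)} platform-reported conversions")
```

Shortening every window to the same length narrows the overlap, but it does not remove it: any purchase preceded by clicks on several platforms inside that shared window is still counted once per platform.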

This over-attribution problem is why many teams find that if they add up all reported conversions across their platforms, the total significantly exceeds their actual revenue. The platforms aren't lying — they're each measuring within their own rules. But those rules weren't designed to give you an accurate cross-platform view. They were designed to make each platform's ads look as effective as possible.
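
A quick way to size the gap is to compare summed platform-reported conversions with the orders your store actually recorded for the same period. The sketch below uses made-up numbers, and `over_attribution_pct` is a hypothetical helper name.

```python
def over_attribution_pct(platform_conversions, actual_orders):
    """Percent by which summed platform-reported conversions exceed real orders."""
    if actual_orders <= 0:
        raise ValueError("actual_orders must be positive")
    reported_total = sum(platform_conversions.values())
    return (reported_total - actual_orders) / actual_orders * 100


# Illustrative numbers only, not taken from any real account.
reported = {"meta": 420, "google": 610, "tiktok": 170}
actual_orders = 800  # orders in the store backend for the same period

print(f"{over_attribution_pct(reported, actual_orders):.0f}% over-attributed")  # 50% over-attributed
```

A result near zero suggests the platforms rarely share customers. A large positive number means the per-platform ROAS figures are being computed on conversions that overlap heavily.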

What Each Platform Is Actually Good For

Fair comparison starts with understanding what role each platform plays in a customer's journey — because expecting the same ROAS from all three is like expecting a billboard and a checkout page to perform the same way.

Google: intent capture at the bottom of the funnel

Google Search is the highest-intent ad environment available. When someone types a query, they're already in the market. Search ads sit at the moment of decision. That's why Google Search ROAS benchmarks run 4.5–6x+ for well-optimized campaigns: they're converting people who have already decided to buy and are choosing between options. Google Shopping campaigns sit in a comparable range at 5.0–6.5x, with Display at 2.5–4.0x and YouTube at 2.0–3.5x, reflecting the drop in intent as you move away from search.

The risk with over-indexing on Google's reported ROAS: a significant portion of Google's branded search conversions are people who were already going to buy from you. They'd heard about you from somewhere else — a Meta ad, a TikTok video, a friend's recommendation — and then Googled your brand name. Google claims the conversion. The other channel did the work of building the consideration.

Meta: the mid-funnel engine that looks like a bottom-funnel channel

Meta's median ROAS across ecommerce sits at approximately 2.2x for new customer acquisition, rising to 3.6x for retargeting campaigns. Advantage+ Shopping campaigns with broad targeting are outperforming manually targeted campaigns by 15–25% in ROAS, according to Meta's own Q3 2025 data — meaning the platform's automation is increasingly doing what human targeting used to do, and doing it better.

Meta's real strength is audience building and retargeting. It can reach people who fit your customer profile before they know they need you, and then follow up with them across the consideration window. The ROAS on cold prospecting campaigns will look lower than Google because the purchase intent isn't there yet. That doesn't mean those campaigns aren't working — it means you're measuring the beginning of a journey, not the end of one.

TikTok: discovery and demand generation with complex attribution

TikTok's median ROAS sits at 1.4–1.6x across most categories, with beauty outperforming significantly at 3.5x. Spark Ads — which boost existing organic content as paid promotions — deliver 30–50% lower CPAs than standard In-Feed ads because they carry the social proof (likes, comments, shares) from their organic performance.

TikTok's attribution challenge is significant. The platform's last-click model undervalues its actual contribution because TikTok users who see an ad rarely convert immediately — they save the product, mention it to someone, or search for it on Google later. Multiple brands have reported that pausing TikTok spend causes drops in branded search volume and shrinks Meta retargeting pools — evidence that TikTok is generating the top-of-funnel awareness that feeds other channels. That impact is real. It's just invisible in the ROAS column.

The Right Framework for Cross-Platform ROAS Comparison

If platform-reported ROAS isn't reliable for cross-platform comparison, what is? The answer isn't one metric — it's a layered measurement approach that gives you confidence at different levels of the business.

Blended ROAS and Marketing Efficiency Ratio (MER)

Blended ROAS is total revenue divided by total ad spend across all channels — not what any individual platform reports, but what your business actually returned for every dollar spent in aggregate. For DTC ecommerce brands, a blended ROAS of 3x or above is generally considered strong, though margin structure can move that target significantly in either direction.

Marketing Efficiency Ratio (MER) takes this further: total revenue divided by total marketing spend, including creative production, agency fees, and influencer costs — not just paid media. MER sidesteps attribution entirely by asking a simpler question: is the marketing operation as a whole generating sufficient return? When attribution is unreliable, MER gives you a ground-truth check that can't be gamed by platform reporting logic.

Use blended ROAS and MER as your top-level health metrics. Use platform-reported ROAS as directional signals within each channel, not as the basis for cross-platform budget allocation decisions.
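
Both metrics are simple ratios, so they are easy to compute from your own books. Here is a minimal Python sketch of the two definitions above. The figures are illustrative, and the function and key names are assumptions made for the example.

```python
def blended_roas(total_revenue, ad_spend_by_channel):
    """Total revenue divided by total ad spend across all channels."""
    return total_revenue / sum(ad_spend_by_channel.values())


def marketing_efficiency_ratio(total_revenue, ad_spend_by_channel, other_marketing_costs):
    """Total revenue divided by all marketing spend, not just paid media."""
    total_spend = sum(ad_spend_by_channel.values()) + sum(other_marketing_costs.values())
    return total_revenue / total_spend


# Illustrative monthly figures only.
revenue = 300_000  # from the store backend, not from any ad platform
ad_spend = {"meta": 55_000, "google": 30_000, "tiktok": 15_000}
other_costs = {"creative_production": 12_000, "agency_fees": 10_000, "influencers": 3_000}

print(f"Blended ROAS: {blended_roas(revenue, ad_spend):.2f}x")  # Blended ROAS: 3.00x
print(f"MER: {marketing_efficiency_ratio(revenue, ad_spend, other_costs):.2f}x")  # MER: 2.40x
```

Note that revenue comes from your own order data in both cases. That is what keeps these numbers independent of any platform's attribution rules.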

Incrementality testing

Incrementality testing measures the lift a channel produces — the additional conversions that wouldn't have happened if that channel hadn't run. It's the most accurate way to understand a channel's true contribution, and it's also the most resource-intensive to set up properly.

The simplest version: pause a channel for a defined period, hold all other variables constant, and measure what happens to total revenue. More sophisticated versions use holdout groups — audiences who don't see the ads — to compare against audiences who do. The difference in conversion rate between the two groups is the channel's incremental lift.

Incrementality testing often reveals that channels with low reported ROAS are contributing more than the number suggests, and that channels with high reported ROAS are partly claiming credit for conversions that would have happened anyway. It's the antidote to the attribution war between platforms.
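
The holdout comparison described above reduces to a few lines of arithmetic. This is a rough sketch with invented numbers, and `incrementality` is a hypothetical helper. A real test would also need a large enough sample and a significance check before acting on the result.

```python
def incrementality(exposed_users, exposed_conversions,
                   holdout_users, holdout_conversions):
    """Compare conversion rates between an exposed group and a holdout group."""
    exposed_rate = exposed_conversions / exposed_users
    holdout_rate = holdout_conversions / holdout_users
    absolute_lift = exposed_rate - holdout_rate
    return {
        "exposed_rate": exposed_rate,
        "holdout_rate": holdout_rate,
        "absolute_lift": absolute_lift,
        # Relative lift is undefined when the holdout never converts.
        "relative_lift": absolute_lift / holdout_rate if holdout_rate else None,
        # Conversions in the exposed group beyond what the holdout rate predicts.
        "incremental_conversions": absolute_lift * exposed_users,
    }


# Illustrative numbers only: 100,000 users saw ads, 20,000 were held out.
result = incrementality(100_000, 2_400, 20_000, 400)

print(f"Exposed conversion rate: {result['exposed_rate']:.2%}")
print(f"Holdout conversion rate: {result['holdout_rate']:.2%}")
print(f"Relative lift: {result['relative_lift']:.0%}")
print(f"Incremental conversions: {result['incremental_conversions']:.0f} of 2400 observed")
```

In this made-up case, most of the exposed group's 2,400 conversions would have happened without the ads, because the holdout converted at 2.0% anyway. Only the difference between the two rates is credited to the channel.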

Post-purchase surveys

One of the most underused data sources in cross-platform attribution is the customer themselves. A simple post-purchase survey asking "How did you first hear about us?" consistently surfaces attribution patterns that no platform data captures — particularly for dark social channels like private recommendations, podcasts, and word of mouth.

The data from post-purchase surveys won't be statistically perfect, but it provides directional intelligence about which channels are generating the initial awareness that eventually leads to a purchase. Combined with platform data, it gives you a more complete picture than either source alone. This is the same attribution logic we explored in the context of paid search in our paid search lead generation guide — the principle that platform attribution never captures the full story of how a customer found you.

Setting Channel-Specific ROAS Targets (Not One Universal Number)

One of the most common ROAS mistakes is applying the same target across all three platforms. It produces bad decisions in both directions: cutting TikTok because it can't match Google's number, or scaling Meta past the point where it's generating incremental returns because it looks efficient on paper.

A DTC brand doing $2M–$10M in annual revenue typically runs 50–60% of spend on Meta, 25–35% on Google, and 10–20% on TikTok. Prospecting campaigns across platforms typically target 2.0–3.0x ROAS, retargeting campaigns 6.0–10.0x, and TikTok-specific campaigns 1.5x as a floor — because TikTok's contribution to the overall funnel justifies a lower direct-response number when the blended performance is strong.

Build your channel targets around your own cost structure and payback window, not around what the internet says a good ROAS is. The benchmark exists to tell you where the average is. Your business is not the average.
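
One way to anchor a target in your own cost structure is to start from break-even ROAS, which is 1 divided by your contribution margin. The sketch below adds an optional `ltv_multiple` to reflect a payback window. That parameter, the function name, and the sample margins are all assumptions for illustration, and the calculation treats margin as uniform across orders.

```python
def break_even_roas(contribution_margin, ltv_multiple=1.0):
    """ROAS at which margin earned on attributed revenue equals ad spend.

    contribution_margin: fraction of revenue left after variable costs (0 to 1).
    ltv_multiple: assumed revenue per customer inside your payback window,
        divided by first-order revenue (1.0 means first order only).
    """
    if not 0 < contribution_margin <= 1:
        raise ValueError("contribution_margin must be in (0, 1]")
    if ltv_multiple < 1:
        raise ValueError("ltv_multiple must be at least 1.0")
    return 1 / (contribution_margin * ltv_multiple)


# Illustrative scenarios only.
scenarios = [
    ("50% margin, first order only", 0.50, 1.0),
    ("25% margin, first order only", 0.25, 1.0),
    ("50% margin, 1.6x revenue within payback window", 0.50, 1.6),
]
for label, margin, ltv in scenarios:
    print(f"{label}: break-even at {break_even_roas(margin, ltv):.2f}x")
```

A channel target then becomes break-even plus whatever profit you need from that funnel stage, which is why a 1.5x floor can be defensible for one business and loss-making for another.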

The Seasonal Dimension: ROAS Isn't Static

ROAS fluctuates significantly across the calendar, and that fluctuation isn't uniform across platforms. Meta's ROAS during Black Friday and Cyber Monday increased 17% in 2024, with conversion rates surging 32%. CPMs also rose sharply over the same period, so part of the conversion-rate gain was absorbed by higher media costs before it reached ROAS. Google Shopping ROAS tends to peak during Q4 and dip in January as post-holiday intent collapses. TikTok's seasonal patterns are more erratic, driven more by content trends than by purchase intent cycles.

The practical implication: ROAS benchmarks pulled from annual averages won't reflect what you should expect in any given month. A 2.5x Meta ROAS in Q1 might be excellent. The same number in Q4 might indicate something has broken. Understanding seasonal baselines for each channel separately is as important as understanding the cross-platform comparison.
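
A per-channel seasonal baseline can be as simple as the median of past ROAS readings for the same quarter. The sketch below uses invented history, and the helper names are hypothetical. It shows why the same 2.5x reads differently in Q1 and Q4.

```python
from collections import defaultdict
from statistics import median


def seasonal_baselines(history):
    """Map (channel, quarter) to the median of that channel's past ROAS readings."""
    grouped = defaultdict(list)
    for channel, quarter, roas in history:
        grouped[(channel, quarter)].append(roas)
    return {key: median(values) for key, values in grouped.items()}


def vs_baseline(channel, quarter, current_roas, baselines):
    """Ratio of current ROAS to the channel's own baseline for that quarter."""
    return current_roas / baselines[(channel, quarter)]


# Illustrative history only: (channel, quarter, observed ROAS).
history = [
    ("meta", "Q1", 2.0), ("meta", "Q1", 2.2), ("meta", "Q1", 2.1),
    ("meta", "Q4", 3.1), ("meta", "Q4", 3.4), ("meta", "Q4", 3.3),
]
baselines = seasonal_baselines(history)

for quarter in ("Q1", "Q4"):
    ratio = vs_baseline("meta", quarter, 2.5, baselines)
    print(f"Meta at 2.5x in {quarter}: {ratio:.0%} of its seasonal baseline")
```

With this sample history, 2.5x sits above the Q1 baseline and well below the Q4 one, so the same reading would prompt very different responses.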

Where Prism Fits: Running the Comparison Without the Manual Overhead

This is the specific problem Prism is built to solve. Prism includes a pre-built Cross-Platform ROAS Comparison template — available from the Template Gallery under the Performance category — that pulls live data from connected Meta, Google, and TikTok ad accounts and aggregates them into a single unified view. Rather than toggling between three separate dashboards and trying to reconcile figures calculated under different rules, you run one query and get a side-by-side breakdown of ROAS, spend, conversions, and CPA across all three platforms, with week-over-week comparisons built in.

Each platform has a dedicated agent handling the data pull: the Meta agent covers Facebook and Instagram, the Google agent covers Search, YouTube, and Display, and the TikTok Ads agent covers TikTok advertising. The Cross-Platform template pulls from all three simultaneously. The practical output is what most teams spend hours assembling manually: a single view of which platform is returning most efficiently, where spend is distributed, and how performance has shifted over the comparison period.

The more powerful use case is when you add your own data on top. Prism lets you connect a Google Sheet containing your profit margins or customer LTV figures alongside the platform data — which moves the analysis from platform-reported ROAS to actual return on investment. That distinction matters: a 3.2x Meta ROAS looks different when your margin on that product is 60% than when it's 20%. With your margin data connected, Prism can run that calculation in the same query using the template as the base and your own numbers as the context.
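
The margin point is easy to check by hand. The following Python sketch is plain arithmetic and not a representation of how Prism performs the calculation. `profit_per_ad_dollar` is a hypothetical helper, and it considers gross margin only, ignoring other costs.

```python
def profit_per_ad_dollar(roas, gross_margin):
    """Gross profit left per dollar of ad spend, after paying for the ad itself."""
    return roas * gross_margin - 1.0


# The same 3.2x ROAS under the two margins mentioned above.
for margin in (0.60, 0.20):
    profit = profit_per_ad_dollar(3.2, margin)
    print(f"3.2x ROAS at {margin:.0%} margin: {profit:+.2f} per ad dollar")
```

At a 60% margin, each ad dollar returns about 92 cents of gross profit on top of covering itself. At a 20% margin, the same 3.2x loses about 36 cents per dollar spent.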

The risk of waiting for a weekly report to surface a ROAS drift is that by the time it lands, the budget has already moved in the wrong direction for days. That's the argument for real-time cross-platform monitoring — not as a dashboard you check when you remember to, but as an intelligence layer that surfaces the signal before it becomes an expensive miss. That's the case we made in Real-Time Budget Optimization: How Teams Prevent ROAS Drift Before It Shows Up in the Weekly Report.

The Creative Variable: Why the Same Budget Returns Differently on Each Platform

Cross-platform ROAS comparison often focuses on attribution and budget allocation, but there's a third variable that's equally significant: creative format. The same product, the same offer, and the same budget will return very differently across platforms not just because of audience intent, but because of how well the creative fits the native context.

Google Search ads are text-based. Creative quality in the traditional sense doesn't apply; what matters is relevance to search intent, landing page quality, and offer competitiveness. Meta rewards visual creative that stops the scroll and speaks to the audience it reaches. TikTok rewards native, entertainment-first video that doesn't feel like an ad. UGC-style creative on TikTok outperforms polished brand creative by 2–3x in conversion rate, according to TikTok's Creative Center data, which means that a brand running the same creative across Meta and TikTok is almost certainly underperforming on one of them.

Creative is increasingly the primary performance variable on social platforms — not targeting, not bidding logic. What differentiates accounts on Meta and TikTok now is the quality and velocity of creative input, not access to tools or audience data. We covered the mechanics of this in detail in How High-Performance Teams Beat Creative Fatigue Without Expanding Studio Headcount. The connection to ROAS is direct: poor creative fit suppresses ROAS, and the platform gets blamed for underperforming when the real issue is that the ad didn't belong there.

ROAS Is a Signal, Not a Scoreboard

The brands making the best budget decisions across Meta, Google, and TikTok in 2026 are not the ones with the highest platform-reported ROAS. They're the ones who understand what each platform's ROAS number actually means — and what it's hiding.

Google's high ROAS is partly real, partly a function of harvesting demand that other channels created. Meta's mid-range ROAS reflects its dual role as both a discovery and retargeting engine. TikTok's lower ROAS understates its contribution to the funnel because most of its influence happens before the last click.

The right way to read cross-platform ROAS is not as a ranking system but as a diagnostic tool: each platform's number tells you something about how it's operating within your funnel, not whether it deserves to be in the budget at all.

The question worth asking is not "which platform has the best ROAS?" It's "do I have a measurement framework that can tell me what's actually driving my revenue, and what would happen if I changed any one variable in the mix?" That's the question a blended ROAS, an MER, and the occasional incrementality test are designed to answer.

And it's the question Prism's Cross-Platform ROAS Comparison template is designed to make answerable without a manual data pull. Connect your Meta, Google, and TikTok accounts, add your margin data in a Google Sheet, and run the query. What you get back isn't just a side-by-side ROAS table. It's a read on what your ad spend is actually returning against your real cost structure, broken out by platform, by week, and by the metrics that actually move your business. Platform-reported ROAS is where the conversation starts. It shouldn't be where it ends.

Frequently Asked Questions

Can you directly compare ROAS across Meta, Google, and TikTok?

Not without adjusting for attribution windows and funnel position. Each platform's default settings measure different time horizons, and because those windows overlap, the same conversion can be claimed by multiple platforms simultaneously. A unified attribution layer, blended ROAS calculation, or first-party measurement approach is required before cross-platform figures mean anything reliable.

What is a good ROAS for Meta ads in 2026?

For Meta, a median ROAS of 2.2x for cold prospecting and 3.6x for retargeting campaigns reflects typical 2025 performance across ecommerce brands. For impulse-purchase categories like apparel and beauty, 3.0x or above is considered strong on cold traffic. For higher-ticket items like furniture or electronics, 1.8–2.5x is more realistic given the longer consideration window. Meta's Advantage+ Shopping campaigns are outperforming manually targeted campaigns by 15–25% in ROAS as of Q3 2025, making campaign structure increasingly important.

What is a good ROAS for Google Ads in 2026?

Google Search typically runs the highest ROAS of the three platforms because it captures people who are already in buying mode. Strong Search campaigns benchmark at 4.5x median, with top performers reaching 6.0–8.0x on branded and high-intent keywords. Shopping campaigns run slightly lower. Display and YouTube sit closer to 2.0–3.5x, reflecting their role earlier in the funnel. The key caveat: a portion of Google's branded search conversions represent people who were already going to buy and simply used Google to navigate — meaning Google's reported ROAS often includes credit that belongs to upstream channels.

What is a good ROAS for TikTok ads in 2026?

TikTok's median ROAS sits at 1.4–1.6x across most ecommerce categories, with beauty outperforming at approximately 3.5x. A floor of 1.5x is a reasonable TikTok-specific target for cold traffic DTC campaigns. Spark Ads — which boost existing organic content — typically deliver 30–50% lower CPAs than standard In-Feed ads. The number will always look lower than Meta or Google because TikTok operates earlier in the funnel. The right question isn't whether TikTok's ROAS matches Google's — it's whether TikTok's presence is growing the pool of people who eventually convert on other channels.

What is blended ROAS and why does it matter for cross-platform comparison?

Blended ROAS is total revenue divided by total ad spend across all channels. It matters because it sidesteps the attribution conflict entirely — rather than asking which platform deserves credit for a conversion, it asks whether the combined spend is generating sufficient return. For DTC ecommerce, 3x or above is generally considered strong, though your margin structure and LTV will shift that target.

What is MER and how does it differ from ROAS?

Marketing Efficiency Ratio (MER) is total revenue divided by total marketing spend — including not just paid media but creative production costs, agency fees, influencer fees, and any other marketing expenditure. Where ROAS measures the return on ad spend specifically, MER measures the return on the entire marketing investment. MER is particularly useful when attribution is unreliable or contested across platforms, because it bypasses the question of which channel gets credit and asks instead whether marketing as a whole is generating a sufficient return. Many performance teams now use MER alongside blended ROAS as a ground-truth health metric.

How do attribution windows affect cross-platform ROAS comparison?

Attribution windows determine how long after an ad interaction a conversion is credited to that ad. Because Meta, Google, and TikTok use different defaults — and those windows overlap — the same purchase can appear in all three platforms' reports simultaneously. The customer converts once. The platforms each count it. Aligning your attribution settings across platforms, or using a first-party measurement layer that applies consistent rules, is the only way to make the numbers genuinely comparable.

Key Takeaways

Platform-reported ROAS figures are not directly comparable. Meta, Google, and TikTok use different attribution windows and models, meaning the same conversion can be claimed by multiple platforms simultaneously. Cross-platform comparison requires a unified attribution layer or blended measurement approach.

Each platform serves a different funnel role. Google Search captures high-intent demand at 4.5x median ROAS, Meta builds and retargets at 2.2–3.6x, and TikTok generates top-of-funnel awareness at 1.4–1.6x. Expecting the same ROAS from all three is a category error.

Blended ROAS and MER are the most reliable top-level health metrics. They bypass the attribution conflict and give you a ground-truth view of whether the combined marketing investment is generating sufficient return.

Set channel-specific ROAS targets based on funnel stage, not one universal number. Prospecting campaigns typically target 2.0–3.0x, retargeting 6.0–10.0x, and TikTok cold traffic a floor of approximately 1.5x.

Creative fit is a ROAS variable. Poor creative match to platform context suppresses ROAS in ways that look like a platform problem but are a creative problem. Platform-specific creative — UGC-style for TikTok, intent-matched for Google, scroll-stopping for Meta — is not optional for accurate cross-platform comparison.