You already know what your weekly performance review should cover. Pacing against plan. ROAS drift across campaigns. CPC movement. Creative fatigue signals. Budget concentration risk. Audience overlap. Automated rule behavior.

The problem isn't knowing what to check. The problem is actually checking it — every week, on time, under the same framework, without someone having to remember to pull the data, format the comparison, and send the summary before the next meeting.

Most performance teams are losing ground not because their strategy is wrong, but because their monitoring is inconsistent. The strategy meeting happens. The campaign launches. And then the operational review layer starts to fray — skipped during a busy week, rushed before a leadership call, or run differently depending on who had capacity that day.

The Real Cost of Manual Recurring Analysis

Performance marketing in 2026 moves fast. Budgets shift daily. Creative fatigue can show up within 72 hours of a new ad going live. CPC volatility can meaningfully alter your acquisition efficiency over a single weekend. Attribution signals fragment across Meta, Google, TikTok, and programmatic channels simultaneously. Competitive responses — price changes, new creative, shifted targeting — happen in hours, not weeks.

And yet, most teams are still running their recurring reviews through a process that looks something like this:

Someone sets a calendar reminder. When it fires, they open five tabs, pull platform exports, paste data into a spreadsheet, build a comparison against last week, interpret the deltas, and write a summary — all before their 10am meeting. If they're out sick, the review doesn't happen. If they're heads-down on a launch, it gets pushed. If they leave the company, the process leaves with them.

The deeper problem isn't inefficiency. It's invisible inconsistency. When performance reviews depend on memory and manual effort, three things reliably happen:

Reviews get skipped during high-pressure cycles. The weeks when your campaigns need the most scrutiny — big launches, high-spend periods, Q4 — are precisely when analyst time is most constrained and the review is most likely to get deprioritized.

Insights arrive late. A ROAS deviation that appeared on Tuesday doesn't surface until Friday's review. By then, you've already spent two more days at the wrong efficiency level. Budget has leaked. Decisions have been made on stale information.

Standards drift across the team. One analyst frames weekly performance as a trailing 7-day comparison. Another uses the prior week as a fixed anchor. One leads with ROAS. Another starts with CPC trends. The outputs look different, read differently, and can't be meaningfully stacked to build trend visibility over time.

Over months, this variability compounds. Performance volatility increases — not because the strategy is fundamentally broken, but because the monitoring beneath it isn't holding.

Why Analytical Consistency Beats Analytical Brilliance

The performance marketing edge rarely comes from a single brilliant insight that changes everything. It comes from catching ROAS drift early, before it erodes a month of efficiency. It comes from spotting creative fatigue at the first signal, before CPA climbs and you're scrambling to explain it to leadership. It comes from identifying budget misallocation in week two of a campaign, not in the post-mortem. It comes from correcting pacing before month-end damage is locked in.

None of this requires brilliance. It requires a system that runs the same analysis reliably, at fixed intervals, under the same structure — whether your best analyst is available or not, whether the week is busy or slow, whether the team is scaling or firefighting.

Here's another risk that compounds quietly: interpretive drift.

Two analysts reviewing the same account in the same week can surface completely different narratives — and both can be technically correct. One looks at a 7-day window. The other uses 14 days. One benchmarks against last week. The other uses a 4-week rolling average. One flags a 12% CPC increase as concerning. The other calls it within normal variance.

None of these choices are individually wrong. But across a team reviewing accounts on rotating schedules, the inconsistency makes it nearly impossible to trend meaningfully or build institutional memory. You can't confidently say "performance is declining" if the framework for measuring performance keeps shifting underneath the data.
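To make interpretive drift concrete, here is a minimal sketch of two analysts reading the same CPC series against different baselines. The daily values are invented for illustration, and the function and variable names are assumptions, not part of any platform export.

```python
from statistics import mean

# Hypothetical daily CPC values (USD) for the last 35 days, oldest first.
# Illustrative numbers only, not real account data.
daily_cpc = [1.00] * 21 + [1.10] * 7 + [1.18] * 7


def pct_change(current: float, baseline: float) -> float:
    """Relative change of current versus baseline, as a percentage."""
    return (current - baseline) / baseline * 100


this_week = mean(daily_cpc[-7:])

# Analyst A: this week versus the prior week.
vs_prior_week = pct_change(this_week, mean(daily_cpc[-14:-7]))

# Analyst B: this week versus the average of the four weeks before it.
vs_four_week_avg = pct_change(this_week, mean(daily_cpc[-35:-7]))

print(f"vs prior week:     {vs_prior_week:+.1f}%")
print(f"vs 4-week average: {vs_four_week_avg:+.1f}%")
```

With these numbers, the week-over-week view reads about +7% and the four-week view about +15%. Against a 10% alert threshold, one analyst calls it normal variance and the other raises a flag, from identical data.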

The question worth sitting with: does your team have a system that holds? Or does it have a calendar reminder and the hope that someone has time?

Introducing Prism by Pixis — and Where Scheduled Workflows Fit In

Prism is Pixis' AI-powered ad campaign manager, trained on over 3 billion data points and built to turn prompts into answers, insights, and optimization — through a conversational interface. No dashboard diving. No manual exports. Just questions asked in plain English, and analysis that actually reflects your account's history and goals.

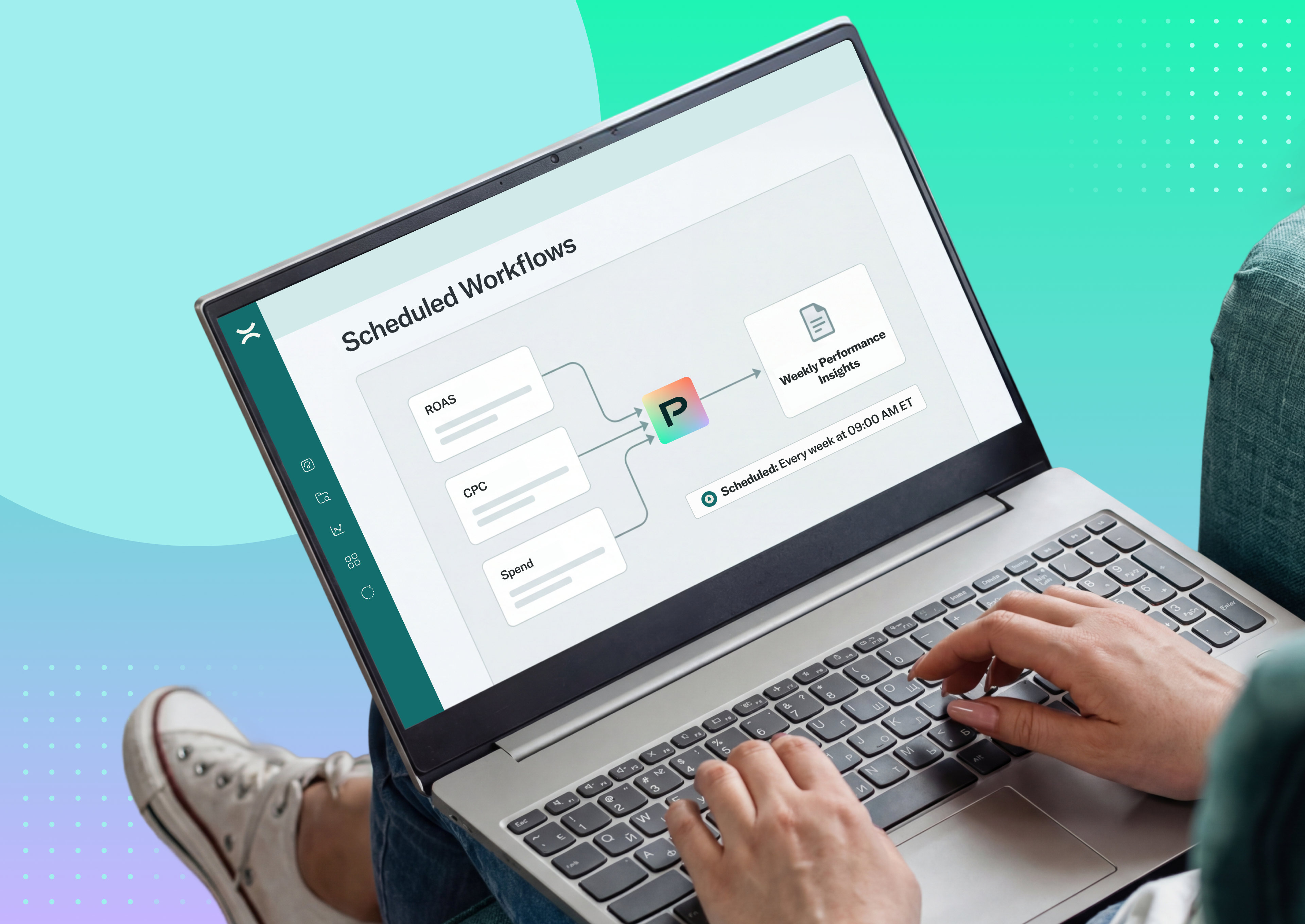

Within Prism, Scheduled Workflows is the feature that solves the recurring analysis problem directly. The premise is simple: define your prompts once, set a schedule, and Prism runs the analysis automatically — daily, weekly, monthly, or on a custom cadence — generating a new structured conversation with the results each time.

The shift this enables isn't just operational. It changes the default posture of the entire team. Instead of "we'll check that next week," it becomes "we've already reviewed it." That's what operational maturity looks like in a performance marketing context.

How Scheduled Workflows Are Built

Scheduled Workflows are structured around four capabilities that work together to replace the manual review cycle entirely.

Reliable Recurring Insights

The most basic failure in manual review processes is cadence — reviews that should happen weekly end up happening when someone remembers. Scheduled Workflows fix this at the root. Performance checks run at fixed daily, weekly, or monthly intervals with no dependency on manual reminders. Every review happens on time, regardless of team capacity, sprint cycles, or competing priorities. The cadence is the system, not someone's calendar discipline.

Consistent Analysis Framework

Consistency is what transforms individual reviews into a trend you can learn from. Scheduled Workflows enforce the same prompts on every run, apply fixed agent and ad account context throughout, and maintain standardized structure across every recurring review. The result is that week twelve's analysis is genuinely comparable to week one's — same lens, same logic, same structure. This is what makes it possible to build institutional memory rather than just a pile of one-off reports.

Flexible Workflow Design

Not every review needs the same depth. Scheduled Workflows support single-prompt workflows for quick daily monitoring — budget pacing, ROAS deviation, CPC movement — and multi-step workflows for deeper analysis that needs to unfold in a logical sequence. Optional wait times between steps mean you can model the kind of structured review logic that mirrors how a thorough analyst actually thinks through a complex account: start with the top-line picture, then drill into creative performance, then examine audience efficiency, then close with automated rule behavior. The workflow is designed once, and it runs exactly that way every time.
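One way to picture the single-prompt versus multi-step distinction is as a small data structure. The sketch below is purely illustrative: the `Step` and `Workflow` classes, their field names, the five-minute waits, and the prompts are all hypothetical and are not Prism's actual configuration format or API.

```python
from dataclasses import dataclass

ALLOWED_CADENCES = {"daily", "weekly", "monthly", "custom"}


@dataclass(frozen=True)
class Step:
    prompt: str
    wait_minutes: int = 0  # optional pause before this step runs


@dataclass(frozen=True)
class Workflow:
    name: str
    cadence: str
    steps: tuple[Step, ...]

    def __post_init__(self):
        # Reject definitions that could not be scheduled or run.
        if self.cadence not in ALLOWED_CADENCES:
            raise ValueError(f"unknown cadence: {self.cadence!r}")
        if not self.steps:
            raise ValueError("a workflow needs at least one step")


def run_offsets(workflow: Workflow) -> list[tuple[int, str]]:
    """Return (minutes after start, prompt) for each step, in order."""
    offsets = []
    elapsed = 0
    for step in workflow.steps:
        elapsed += step.wait_minutes
        offsets.append((elapsed, step.prompt))
    return offsets


# Single-prompt workflow for quick daily monitoring.
daily_pacing = Workflow(
    name="Daily pacing check",
    cadence="daily",
    steps=(Step("Summarize budget pacing, ROAS deviation, and CPC movement."),),
)

# Multi-step workflow that follows the sequence described above.
weekly_audit = Workflow(
    name="Weekly account review",
    cadence="weekly",
    steps=(
        Step("Give the top-line performance picture for the last 7 days."),
        Step("Drill into creative performance.", wait_minutes=5),
        Step("Examine audience efficiency.", wait_minutes=5),
        Step("Review automated rule behavior.", wait_minutes=5),
    ),
)

for minutes, prompt in run_offsets(weekly_audit):
    print(f"+{minutes:>2} min  {prompt}")
```

The point of the frozen dataclasses is the same as the point of the feature: once the sequence is defined, every run follows it in the same order.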

Explore Instantly

Each time a workflow executes, it generates a new conversation accessible instantly from execution history. This matters more than it might seem. It means you're not just receiving a static report — you can review the results, ask follow-up questions, and go deeper on anything that surfaces. And because the full history of executions is available, you can compare how the same workflow read the account last week versus this week, or last quarter versus this one, without rebuilding any context from scratch.

Where Scheduled Workflows Have the Most Operational Impact

Daily Account Health Monitoring

The gap between when a problem first appears in account data and when someone notices it is one of the most expensive gaps in performance operations. In a manual review environment, that gap is often measured in days. An automated rule starts behaving unexpectedly on Monday. Someone catches it Thursday. You've spent three days at degraded efficiency with no visibility into the cause.

A daily scheduled workflow closes that gap. Pacing deviations, ROAS shifts, CPC spikes, and spend concentration anomalies surface within 24 hours. For high-spend accounts, where a single day at the wrong efficiency level can mean thousands of dollars misallocated, daily monitoring isn't a nice-to-have. It's a baseline requirement.
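The arithmetic behind that gap is simple enough to sketch. The example below assumes a hypothetical account spending $5,000 a day whose ROAS slips from a 4.0 target to 3.2; every figure and name here is an assumption chosen for illustration, not a benchmark.

```python
def revenue_shortfall(daily_spend: float, target_roas: float,
                      actual_roas: float, days_undetected: int) -> float:
    """Revenue missed while spend runs below target ROAS without anyone noticing."""
    return daily_spend * (target_roas - actual_roas) * days_undetected


# Hypothetical inputs, for illustration only.
DAILY_SPEND = 5_000.0
TARGET_ROAS = 4.0
ACTUAL_ROAS = 3.2

# Problem starts Monday, caught Thursday: three days undetected.
caught_thursday = revenue_shortfall(DAILY_SPEND, TARGET_ROAS, ACTUAL_ROAS, 3)

# Same problem caught by a daily check: one day undetected.
caught_next_day = revenue_shortfall(DAILY_SPEND, TARGET_ROAS, ACTUAL_ROAS, 1)

print(f"Caught Thursday: ${caught_thursday:,.0f} shortfall")
print(f"Caught next day: ${caught_next_day:,.0f} shortfall")
```

With these made-up inputs, the three-day lag costs roughly $12,000 in missed revenue, against roughly $4,000 for a next-day catch. The shortfall scales linearly with detection lag, which is why the cadence matters more as spend grows.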

Weekly Performance Reviews

Weekly reviews fail most often not because of a lack of data, but because of a lack of consistent structure. They're rushed. They depend on whoever is available to prep them. And because they're inconsistent, they can't be compared meaningfully over time — twelve weeks of data with no reliable framework connecting them.

With Scheduled Workflows, the same structured review runs automatically every week. No reminders. No export dependency. Every team member works from the same analytical framework. Leadership sees standardized outputs they can actually compare across weeks and quarters. And the review becomes a starting point for strategic conversation, not a reporting task that has to happen before the conversation can start.

Monthly Spend and ROAS Summaries

Month-end reporting in most organizations is reactive by design. The month closes, the data is pulled, the summary is built, and leadership sees results that are already up to 30 days old. Scheduled Workflows change this dynamic by building the monthly picture as the month unfolds. Weekly summaries under the same template accumulate over the month, so by the time the month closes, you're not constructing a summary from scratch. You're reviewing four weeks of consistent data you've already seen developing. Month-end becomes cumulative rather than reactive.
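One detail worth getting right when weekly summaries roll up into a monthly figure: ROAS should be recomputed from total revenue and total spend, not averaged across weeks. A minimal sketch, using invented weekly figures and assumed field names:

```python
from statistics import mean

# Hypothetical weekly summaries for one month (USD). Week 3 is a heavy-spend week.
weeks = [
    {"spend": 10_000, "revenue": 40_000},
    {"spend": 10_000, "revenue": 38_000},
    {"spend": 30_000, "revenue": 75_000},
    {"spend": 10_000, "revenue": 39_000},
]

total_spend = sum(w["spend"] for w in weeks)
total_revenue = sum(w["revenue"] for w in weeks)

# Correct roll-up: total revenue over total spend.
monthly_roas = total_revenue / total_spend

# Tempting shortcut: average the four weekly ROAS values.
# This ignores that week 3 carried half of the month's spend.
naive_roas = mean(w["revenue"] / w["spend"] for w in weeks)

print(f"Monthly ROAS (spend-weighted): {monthly_roas:.2f}")
print(f"Average of weekly ROAS:        {naive_roas:.2f}")
```

Here the spend-weighted figure is 3.20 while the simple average reads 3.55, because the weakest week was also the most expensive one. Keeping spend and revenue in every weekly summary is what makes the cumulative monthly view trustworthy.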

Multi-Step Campaign Audits

A thorough campaign audit (covering performance trends, audience efficiency, placement breakdown, creative diagnostics, budget concentration, and cross-platform comparison) can take an experienced analyst three to four hours to build from scratch. Most teams run them quarterly at best, which means the picture of account health they're working from can be up to 90 days old.

Multi-step Scheduled Workflows automate this sequence. The audit structure is defined once and runs on cadence. Instead of rebuilding it each time, the team receives a consistent, structured audit output on whatever schedule makes sense for the account. Time shifts from repetition to optimization. Audit frequency can increase without a corresponding increase in analyst hours.

What Scheduled Workflows Don't Replace

Scheduled Workflows don't automatically change budgets or bids. They don't replace strategic judgment about where to invest or when to pull back. They don't execute platform-level changes.

What they do is ensure the analysis layer never falls behind the decision layer. They surface what's happening, reliably and consistently, so that the humans making strategic decisions always have current, structured information to work from. The goal isn't to remove judgment from performance marketing — it's to give judgment better inputs, more consistently.

The Compounding Effect of Missed Reviews

It's easy to underestimate the cost of a skipped review. One missed daily check. One rushed weekly summary. One quarterly audit that got pushed because the team was heads-down on a launch.

Each individual miss feels small. But the misses compound. The number of days with undetected anomalies increases. The number of strategic decisions made on stale information increases. Performance volatility creeps up steadily, and the team starts spending more time on reactive firefighting and less on proactive optimization. By the time someone identifies the pattern, months of inefficiency have already accumulated.

Scheduled Workflows reduce that entropy. They institutionalize the consistency that makes improvement possible. And in performance marketing, consistency is what makes scale sustainable.

Recurring analysis runs automatically. No dependency on manual follow-ups. Consistent cadence across teams. Time shifted from repetition to optimization.

Stop repeating the same analysis. Schedule it once. Let Prism run it.